OAuth2 for JavaScript Developers

OAuth is a staple of modern web development. It's the magic behind the "Log in with Facebook" or "Connect to Google" buttons you see everywhere. But what exactly is it, and how does it work, especially for a JavaScript developer? Let's dive in, using GitHub as our primary example.

What is OAuth?

At its core, OAuth (Open Authorization) is a protocol for authorization. It allows third-party applications to access user data without needing the user's password. Instead of users sharing their password with an app, the app is granted a token that it can use to act on their behalf. This offers several benefits; the most significant is that the app doesn't need to store user passwords, which greatly reduces potential attack vectors.

Although OAuth is often used interchangeably with OAuth2, they are distinct. OAuth 1.0, introduced in 2007, is the first version of the protocol. While it provided a solid method for consumers to access protected resources without user credentials, it had complexities, such as the need for cryptographic libraries for request signing.

Recognizing these challenges, the community introduced OAuth2 in 2012. OAuth2 simplified many aspects, eliminating the need for cryptographic signatures and emphasizing bearer tokens, which are easier to manage. OAuth2 provides flexibility with various methods of obtaining access tokens suitable for different application types.

OAuth2 Steps Explained Using GitHub

To explain how OAuth2 works, it's best to describe the entire process step-by-step. It's challenging to do this without code, so we'll reference our starter.dev backend showcase, which illustrates integrating a serverless backend with GitHub as an OAuth2 provider.

Although the steps below are tailored to GitHub's OAuth2 implementation, it's important to note that the core flow is consistent across most OAuth2 services. For most of these services, you'll only need to adjust elements like URLs, query parameter names, and so on.

Also, please note that our starter.dev backend showcase is designed to be deployed on Netlify. As such, all the API endpoints run as Netlify functions. When running the app locally, you must prepend the URL path with /.netlify/functions/server to access these endpoints. This step is unnecessary for the deployed version; API endpoints can be accessed directly (e.g., /api/auth/signin instead of /.netlify/functions/server/api/auth/signin) due to the redirects configured in the netlify.toml file.
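As a rough illustration of that path difference, the sketch below prepends the Netlify functions prefix only when running locally. The helper name `apiPath` and the `isLocal` flag are assumptions made for this example; they are not part of the showcase.

```javascript
// Prefix taken from the text above; needed only when running locally.
const NETLIFY_FUNCTIONS_PREFIX = '/.netlify/functions/server';

// Hypothetical helper: returns the path to call for a given API route.
function apiPath(route, isLocal) {
  return isLocal ? `${NETLIFY_FUNCTIONS_PREFIX}${route}` : route;
}

console.log(apiPath('/api/auth/signin', true));
// → /.netlify/functions/server/api/auth/signin
console.log(apiPath('/api/auth/signin', false));
// → /api/auth/signin
```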

Step 0: Creating the App on GitHub

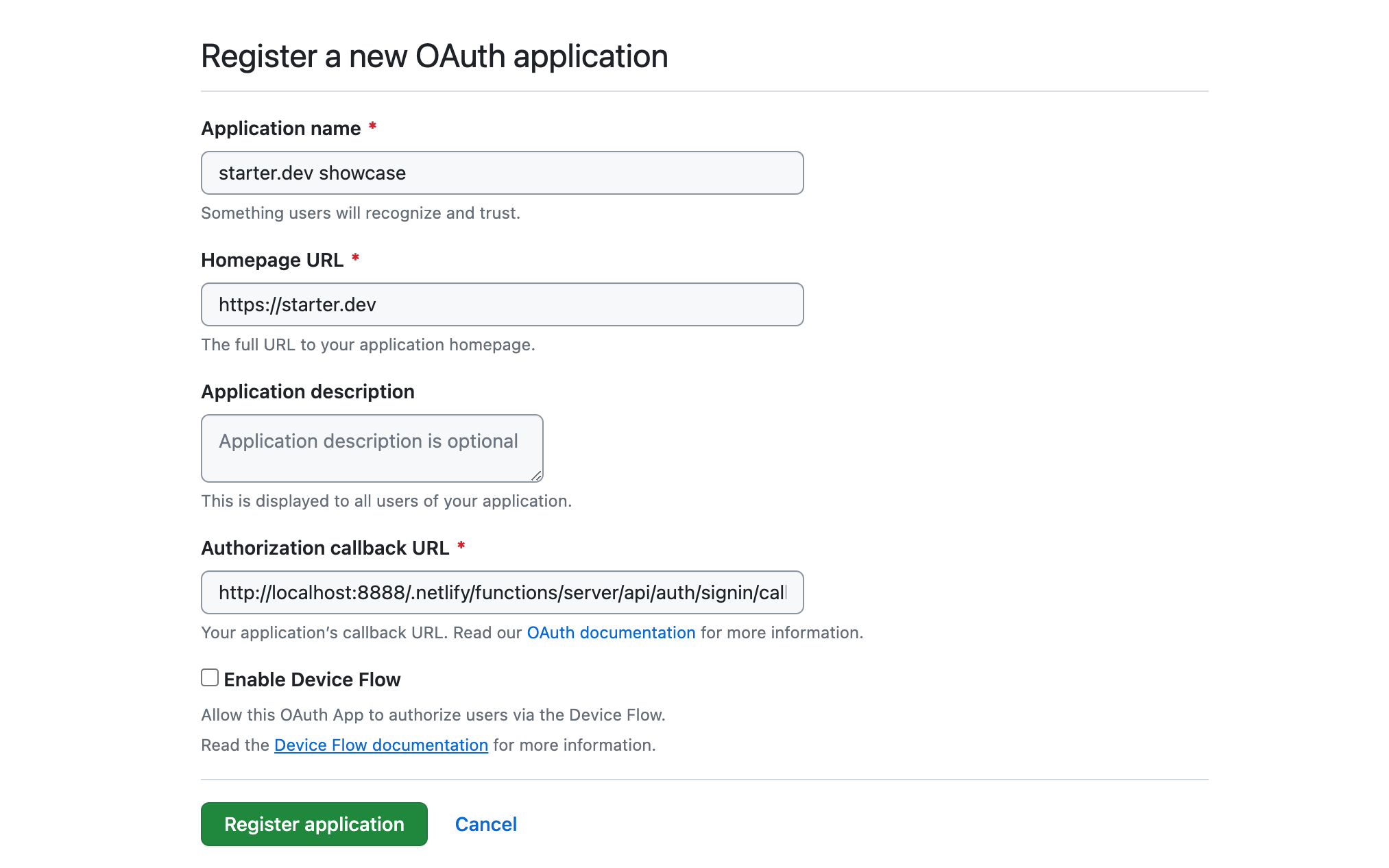

The first step is to create your app with the provider. For GitHub, this is done on the Settings / Developer Settings / OAuth Apps page. This step is essential so that the provider can identify your app and possibly manage service-level parameters, like rate limits.

In the screenshot above, when registering our app on GitHub, the authorization callback URL is a local URL. This is suitable for local development. However, in production, it should be a public API endpoint accessible to GitHub, like an API endpoint on Netlify.

After registering the app, you'll receive a public client ID for your app and will need to generate a client secret (which should stay private). The client secret, used alongside the client ID, helps authenticate your application when trading an authorization code for an access token. Essentially, the client secret assures GitHub that the request for an access token genuinely comes from your app and not a malicious actor.
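Since both values are needed later in the flow, it can help to fail fast when either is missing. The following is a minimal sketch, assuming the credentials are supplied through the GITHUB_CLIENT_ID and GITHUB_CLIENT_SECRET environment variables used by the showcase code later in this article; the helper name `loadOAuthConfig` is hypothetical.

```javascript
// Hypothetical helper: reads the OAuth credentials from an env-like object
// and throws early if either one is missing.
function loadOAuthConfig(env) {
  const clientId = env.GITHUB_CLIENT_ID;
  const clientSecret = env.GITHUB_CLIENT_SECRET;
  if (!clientId || !clientSecret) {
    throw new Error('GITHUB_CLIENT_ID and GITHUB_CLIENT_SECRET must be set.');
  }
  return { clientId, clientSecret };
}

// Example with placeholder values; a real app would pass process.env instead.
const config = loadOAuthConfig({
  GITHUB_CLIENT_ID: 'example-id',
  GITHUB_CLIENT_SECRET: 'example-secret',
});
console.log(config.clientId); // → example-id
```

The client secret should only ever be read on the server; it must not be bundled into frontend code.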

Step 1: App Sends User to GitHub for Authorization

The first step in the OAuth2 flow typically involves the user clicking a "Log in with GitHub" button (or something similar) in our web app. This action redirects the user from the web app to the OAuth2 provider's authorization page (in this case, GitHub's). For GitHub, the base URL of that page is https://github.com/login/oauth/authorize, followed by query parameters that describe the request. These include the mandatory client_id, which identifies your app, and several optional parameters, such as redirect_uri (where GitHub will send the user back), scope (the permissions you're requesting), and state (an opaque value that GitHub returns unchanged in the callback).

In our starter.dev example, clicking the "Log in with GitHub" button should lead the user to the /api/auth/signin route in our app. This route generates the GitHub authorization URL, populating it with all the necessary query parameters, and then redirects the user to that URL using a standard HTTP 303 redirect.

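```javascript
// src/lib/signin-url.js
export default (req, res) => {
  // URL to send the user back to once login completes
  const { redirect_url } = req.query;
  if (!redirect_url) {
    return res.status(400).send({ message: 'No redirect_url provided.' });
  }

  // Carry the redirect URL through the OAuth2 round trip in `state`
  const state = Buffer.from(redirect_url).toString('base64');

  const params = new URLSearchParams({
    client_id: process.env.GITHUB_CLIENT_ID ?? '',
    redirect_uri: `${process.env.SERVER_BASE_URL}/api/auth/signin/callback`,
    // GitHub expects a single space-delimited list of scopes
    scope: 'user read:org',
    state,
  });

  res.redirect(
    303,
    `${process.env.GITHUB_OAUTH_URL}/authorize?${params.toString()}`,
  );
};
```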
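The way the redirect URL travels through the state parameter can be checked in isolation. The sketch below mirrors the Base64 encoding used in the sign-in route and the decoding used later in the callback; the function names `encodeState` and `decodeState` are hypothetical and exist only for this example.

```javascript
// Encode the post-login redirect URL into the `state` parameter (Step 1).
function encodeState(redirectUrl) {
  return Buffer.from(redirectUrl, 'utf8').toString('base64');
}

// Decode it again in the callback handler.
function decodeState(state) {
  return Buffer.from(state, 'base64').toString('utf8');
}

const original = 'http://localhost:3000/dashboard';
const roundTripped = decodeState(encodeState(original));
console.log(roundTripped === original); // → true
```

Note that Base64 is an encoding, not encryption: anyone can read or forge such a value. Applications that also rely on state to guard against cross-site request forgery typically include an unguessable random component and verify it in the callback.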

Step 2: User Grants Permission

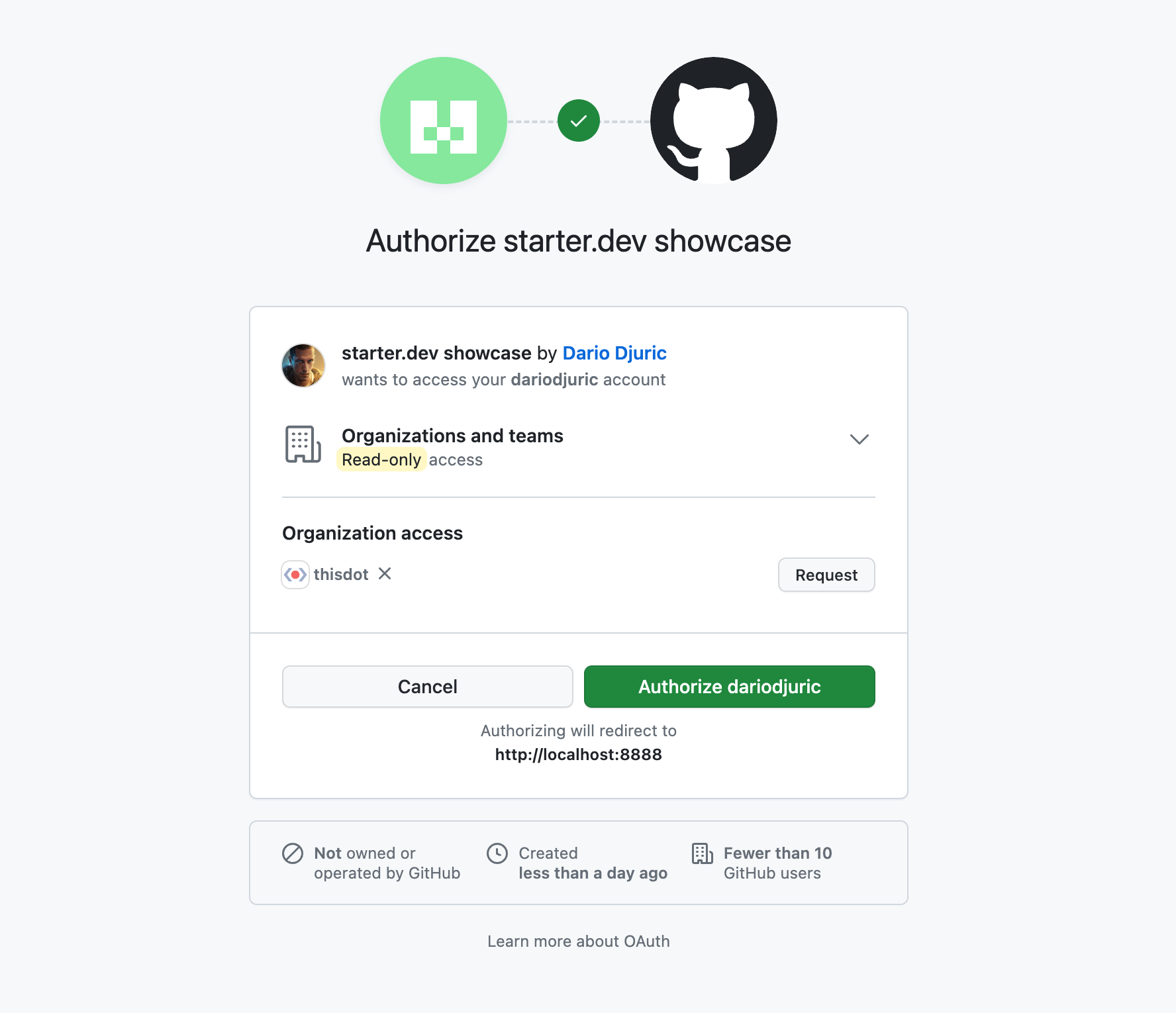

In this step, the user arrives at the provider's authorization page and sees a prompt asking if they allow your app to access the specified data. The user can either approve or decline this request.

For our scenario, this page would appear as follows:

If either the cancel or authorize button is clicked, GitHub will invoke the callback URL specified when registering the app on GitHub. However, if the authorize button is clicked, GitHub will also send a one-time-use code query parameter that can be used to get the access token.
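To make the two outcomes concrete, here is a minimal sketch of how a callback URL could be inspected. The helper name `parseCallback` and the sample URL are hypothetical; the sketch assumes only that an approval carries a code parameter and that a refusal does not, possibly with an error parameter describing why.

```javascript
// Hypothetical helper: inspects the URL the provider redirected the user to.
function parseCallback(callbackUrl) {
  const { searchParams } = new URL(callbackUrl);
  const code = searchParams.get('code');
  const state = searchParams.get('state');
  return code
    ? { authorized: true, code, state }
    : { authorized: false, error: searchParams.get('error'), state };
}

const approved = parseCallback(
  'http://localhost:3000/api/auth/signin/callback?code=abc123&state=xyz',
);
console.log(approved.authorized, approved.code); // → true abc123
```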

Step 3: App Receives an Authorization Code

After the user grants permission, GitHub redirects them to the specified callback URL. Attached to this URL are the one-time-use code query parameter and the state value we sent in Step 1. Our callback handler first checks that both are present:

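```javascript
// src/lib/access-token.js
export default async (req, res) => {
  // Get encoded state and code
  const { state, code } = req.query;

  try {
    if (!state) {
      throw new Error('No state provided.');
    }
    if (!code) {
      throw new Error('No code provided.');
    }

    // Get access token and redirect (shown in the next snippet)
  } catch (err) {
    // Reject the request if either parameter is missing
    return res.status(401).send({
      error: err.message,
      message: 'Authentication failed. Access token could not be retrieved.',
    });
  }
};
```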

Step 3 (continued): App Exchanges Authorization Code for Access Token

With this code, the app makes a POST request to GitHub's token endpoint to exchange the code for an access token. The app sends the client ID, client secret, and the one-time code, and receives the access token in return. The access token should then be persisted in a store (such as Redis) or sent to the frontend as a cookie, as shown below:

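```javascript
// src/lib/access-token.js
// ACCESS_TOKEN_COOKIE holds the cookie name and is defined elsewhere in the project.
export default async (req, res) => {
  // Get encoded state and code (validated as shown in the previous snippet)
  const { state, code } = req.query;

  try {
    const result = await fetch(`${process.env.GITHUB_OAUTH_URL}/access_token`, {
      method: 'POST',
      headers: {
        'Content-Type': 'application/json',
        Accept: 'application/json',
      },
      body: JSON.stringify({
        client_id: process.env.GITHUB_CLIENT_ID ?? '',
        client_secret: process.env.GITHUB_CLIENT_SECRET ?? '',
        code,
      }),
    });
    const data = await result.json();
    const accessToken = data['access_token'];
    if (!accessToken) {
      // A rejected code yields a response without an access token
      throw new Error('No access token in response.');
    }

    // Decode the URL that was stored in `state` during sign-in
    const redirectUrl = Buffer.from(state, 'base64').toString();

    res
      .cookie(ACCESS_TOKEN_COOKIE, accessToken, {
        sameSite: 'None',
        path: '/',
        maxAge: 604800000, // 7 days in milliseconds
        httpOnly: true,
        secure: true,
      })
      .redirect(303, redirectUrl);
  } catch (err) {
    return res.status(401).send({
      // Send the message: an Error object itself serializes to {}
      error: err.message,
      message: 'Authentication failed. Access token could not be retrieved.',
    });
  }
};
```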
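One detail worth handling explicitly is a failed exchange, for example when the code has expired or was already used. The sketch below assumes the failure is reported through the standard OAuth2 `error` and `error_description` fields in the JSON body; the helper name `extractAccessToken` is hypothetical and not part of the showcase.

```javascript
// Hypothetical helper: pulls the token out of the parsed JSON response,
// throwing if the provider reported an error or sent no token.
function extractAccessToken(data) {
  if (data.error) {
    throw new Error(
      `Token exchange failed: ${data.error_description ?? data.error}`,
    );
  }
  if (typeof data.access_token !== 'string' || data.access_token === '') {
    throw new Error('Token exchange response did not include an access token.');
  }
  return data.access_token;
}

// Example with a made-up response body.
console.log(extractAccessToken({ access_token: 'example-token', token_type: 'bearer' }));
// → example-token
```

Checking the body rather than only the HTTP status is a deliberate choice here, since a provider may report the error inside an otherwise successful response.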

Step 4: App Accesses User Data with the Access Token

Once you have the access token, you can use it to make authorized API requests on behalf of the user. All the app needs to do is include the access token in the Authorization header.

```javascript
fetch('https://api.github.com/user', {
  headers: {
    Authorization: `Bearer ${accessToken}`,
  },
});
```

And that is all. As long as the access token is valid, you can call any API endpoint covered by the scopes the user granted in the authorization step.
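In practice, the request is usually wrapped in a small function that also handles an invalid or expired token. The following is a minimal sketch under stated assumptions: the helper name `fetchGitHubUser` and the injectable `fetchImpl` parameter are hypothetical (the latter exists only to make the function easy to test), and the Accept header is the media type GitHub recommends for its REST API.

```javascript
// Hypothetical helper: fetches the authenticated user's profile.
// `fetchImpl` defaults to the global fetch (Node 18+ and browsers).
async function fetchGitHubUser(accessToken, fetchImpl = fetch) {
  const response = await fetchImpl('https://api.github.com/user', {
    headers: {
      Authorization: `Bearer ${accessToken}`,
      Accept: 'application/vnd.github+json',
    },
  });
  if (!response.ok) {
    // For example, 401 when the token is invalid or has been revoked
    throw new Error(`GitHub API request failed with status ${response.status}`);
  }
  return response.json();
}
```

A caller could then write `const user = await fetchGitHubUser(accessToken);` and treat a thrown error as a signal to send the user through the sign-in flow again.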

Conclusion

OAuth2 may appear complex at first, but it provides a robust method for delegated authorization, and it underpins many "Log in with..." flows. For JavaScript developers, understanding the flow makes third-party integrations easier and gives users a safe way to connect services without sharing their passwords. Whether you use GitHub or another provider, the main concepts are the same.

Check out our starter.dev backend showcase for the full code! There's also an associated StackBlitz project if you want to play with it.